Jingwen He, Chao Dong, Yu Qiao

The European Conference on Computer Vision (ECCV), 2020

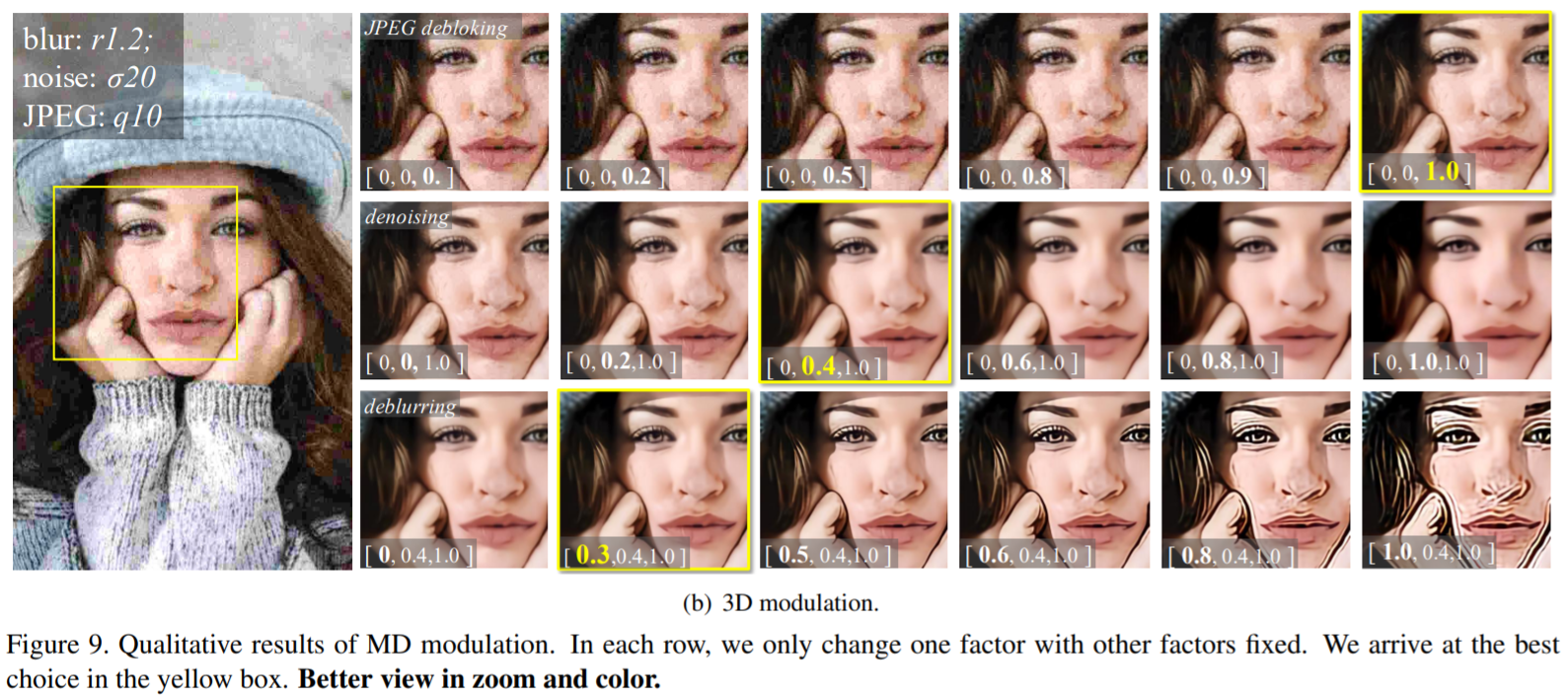

Building on the success of deterministic learning, interactive control of output effects has attracted increasing attention in the image restoration field. The goal is to generate a continuum of restored images by adjusting a controlling coefficient. Existing methods are restricted to realizing a smooth transition between two objectives, whereas real input images may contain several kinds of degradation. To take a step forward, we present a new problem called multi-dimension (MD) modulation, which aims at modulating output effects across multiple degradation types and levels. Compared with the previous single-dimension (SD) modulation, the MD task has three distinct properties: joint modulation, zero starting point, and unbalanced learning. These obstacles motivate us to propose the first MD modulation framework, CResMD, built on newly introduced controllable residual connections. Specifically, we add a controlling variable to the conventional residual connection to allow a weighted summation of input and residual. The exact values of these weights are generated by a condition network. We further propose a new data sampling strategy based on the beta distribution to balance different degradation types and levels. Given the corrupted image and the degradation information as inputs, the network outputs the corresponding restored image. By tweaking the condition vector, users are free to control the output effects in MD space at test time. Extensive experiments demonstrate that the proposed CResMD achieves excellent performance on both SD and MD modulation tasks.
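The two mechanisms named in the abstract, the controllable residual connection and beta-distribution sampling, can be illustrated with a minimal sketch. This is not the authors' implementation: the function names, the single linear layer standing in for the condition network, and the beta parameters `a=0.5, b=1.0` are all assumptions chosen for illustration, and plain Python lists stand in for image tensors.

```python
import random


def controllable_residual(x, residual, alpha):
    """Weighted summation of input and residual: y = x + alpha * residual.

    With alpha = 1 this is a conventional residual connection; with
    alpha = 0 the block passes the input through unchanged.
    """
    return [xi + alpha * ri for xi, ri in zip(x, residual)]


def toy_condition_network(condition, weights, bias=0.0):
    """Hypothetical stand-in for the condition network.

    Maps a condition vector (one entry per degradation type) to a single
    residual weight using one linear layer. The real network's structure
    is not described in the abstract.
    """
    return sum(w * c for w, c in zip(weights, condition)) + bias


def sample_degradation_levels(num_types, a=0.5, b=1.0, rng=random):
    """Draw one level in [0, 1] per degradation type from Beta(a, b).

    The parameters a and b are assumed values, not taken from the source;
    a < 1 skews samples toward low levels.
    """
    return [rng.betavariate(a, b) for _ in range(num_types)]


if __name__ == "__main__":
    x = [1.0, 2.0]
    residual = [0.5, -1.0]
    condition = sample_degradation_levels(2, rng=random.Random(0))
    alpha = toy_condition_network(condition, weights=[0.3, 0.7])
    print(controllable_residual(x, residual, alpha))
```

Under these assumptions, an all-zero condition vector with zero bias gives a weight of 0, so the block returns its input unchanged. That is one plausible reading of how a controllable residual connection can accommodate the zero starting point mentioned above.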