RepSR: Training Efficient VGG-style Super-Resolution Networks with Structural Re-Parameterization and Batch Normalization

Xintao Wang, Chao Dong, Ying Shan

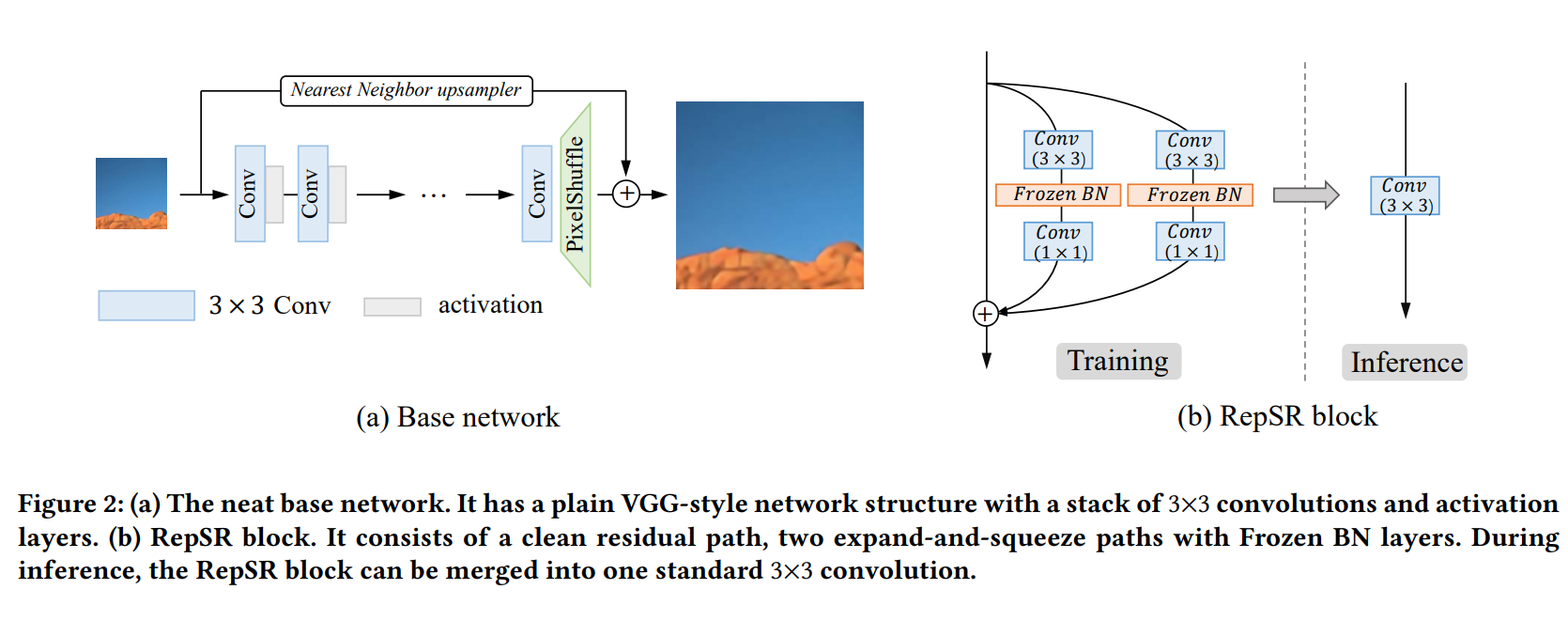

This paper explores training efficient VGG-style super-resolution (SR) networks with the structural re-parameterization technique. The general pipeline of re-parameterization is to train networks with multi-branch topology first, and then merge them into standard 3x3 convolutions for efficient inference. In this work, we revisit those primary designs and investigate essential components for re-parameterizing SR networks. First of all, we find that batch normalization (BN) is important to bring training non-linearity and improve the final performance. However, BN is typically ignored in SR, as it usually degrades the performance and introduces unpleasant artifacts. We carefully analyze the cause of BN issue and then propose a straightforward yet effective solution. In particular, we first train SR networks with mini-batch statistics as usual, and then switch to using population statistics at the later training period. While we have successfully re-introduced BN into SR, we further design a new re-parameterizable block tailored for SR, namely RepSR. It consists of a clean residual path and two expand-and-squeeze convolution paths with the modified BN. Extensive experiments demonstrate that our simple RepSR is capable of achieving superior performance to previous SR re-parameterization methods among different model sizes. In addition, our RepSR can achieve a better trade-off between performance and actual running time (throughput) than previous SR methods.